To truly learn about long-term user motivation, learn how to understand analytics

Three simple steps to get started using analytics with user research

Analytics is a powerful tool that designers often overlook, but it can be an excellent source for understanding users' long-term usage, motivation, and goals.

In fact, it played a significant role in user research for a recent project. The primary research question wasn't about the application's usability but about long-term user motivation and learnability.

In other words, we cared more about our users' 2nd login (when we weren't there to guide them) than their 1st (when they used the site with us). As a result, I relied heavily on Analytics to track user actions and determine if users were motivated to learn new concepts and revisit the site.

If you find yourself needing to track long-term usage or motivation in your project, here is a 3-step process for Designers to get started understanding Analytics:

Define what you're trying to learn in simple terms

Figure out what metrics to prioritize based on that

Figure out how to pair it with qualitative research

But first, let's talk about why you can't rely on a simple usability test for these cases.

Behavioral Analytics help you see what users do in the long term

Unfortunately, user tests always carry an element of bias simply because you're there with the participants.

While many users would be perfectly willing to struggle for 5 minutes to figure out a task when you're around, those same users would likely abandon the website in 30 seconds otherwise.

This is why simply asking about long-term usage is not that helpful. Is a question like "Do you envision using this product long-term?" really going to capture what they'd do accurately? Only partially. There are tons of fitness and diet applications users have said they'd use, only to abandon them later.

In my project, the user's 1st login was all about setting up the product to collect data. Only after 24 hours, when data came in, could users tell us whether this was valuable. So users couldn't tell us anything long-term at the end of the 1st session.

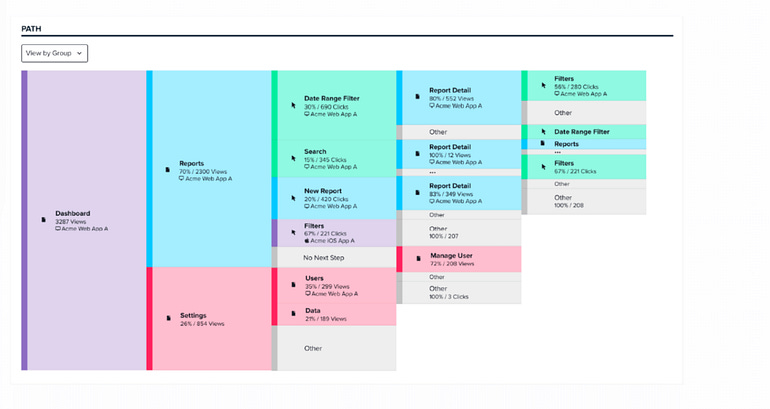

This is where behavioral Analytics shines. It's a way to monitor user activities outside the controlled test environment and see what users do when you're not around.

When your primary research question concerns longer-term goals (like user engagement), Analytics is one of the easiest (and one of the few) ways to track this.

Tools like Pendo.io, which focus primarily on user interactions and engagement, have become more common than more complex analytics tools (like Google Analytics), which makes it much easier to get started.

However, I know that Analytics can still be intimidating, especially to Designers who have never touched data. So, let's go through each step of the process I outlined to understand how to use Analytics.

Define your objectives or risk being overwhelmed

Here's something you might have yet to consider: As a Designer, you are (usually) not the target audience for Analytics. It's instead meant for Business and Data Analysts, Data Engineers, and other roles trained to derive meaningful insights from the wealth of data available.

As a result, the first time you log into Analytics software, you may be overwhelmed by the sheer amount of information and options.

That's why one of the most critical things you need to do as a Designer before opening up Analytics software is to figure out what you're hoping to learn (and also get an account from your organization).

If you don't know what you're looking for, it's easy to get lost: even trained Data Science professionals do this.

If you have created one, a user research plan is one of the best places to start. The research objectives you listed for that plan are often the big ideas you hope to capture, which can help you clarify what you're looking for.

If you don't have a research plan (and you really should), consider thinking of some 'behavior-based' questions you might want to know. You don't have to know the exact terminology; think broadly about what you want to learn.

For example, in my research about long-term user motivation, here are some questions I would want Analytics to answer:

How many users log in for a 2nd/3rd/4th time?

What features are used the most (or least)?

When are the busy times when users log in?

Which pages do users spend the majority of their time on?
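To make the first and third questions concrete, here is a minimal sketch of how they could be answered from a raw export of login events. The data, the user names, and the function names (`users_with_at_least`, `logins_by_hour`) are all made up for illustration; most Analytics tools would give you these numbers through a dashboard rather than code.

```python
from collections import Counter
from datetime import datetime

# Hypothetical export: one (user_id, login timestamp) row per login event.
login_events = [
    ("ana", "2024-03-01T09:15:00"),
    ("ana", "2024-03-02T09:40:00"),
    ("ana", "2024-03-05T18:05:00"),
    ("ben", "2024-03-01T10:00:00"),
    ("cy", "2024-03-01T09:30:00"),
    ("cy", "2024-03-03T21:10:00"),
]


def users_with_at_least(events, n):
    """Count users who logged in at least n times."""
    logins_per_user = Counter(user for user, _ in events)
    return sum(1 for count in logins_per_user.values() if count >= n)


def logins_by_hour(events):
    """Count login events per hour of day (0-23)."""
    return Counter(datetime.fromisoformat(ts).hour for _, ts in events)


for n in (2, 3, 4):
    print(f"Users with {n}+ logins: {users_with_at_least(login_events, n)}")

busiest_hour, count = logins_by_hour(login_events).most_common(1)[0]
print(f"Busiest login hour: {busiest_hour}:00 ({count} logins)")
```

With this sample data, two users came back for a 2nd login, one for a 3rd, and none for a 4th, which is exactly the kind of drop-off you would want to ask about in follow-up research.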

Once you've thought of some basic questions you might want Analytics to answer, you're ready for the next step.

Understand the UX Goals (& metrics) you'd like to find

If there's one acronym you should remember when using analytics, it's HEART. It is a framework created by Google researchers that covers the five categories of metrics most UX professionals want to track: Happiness, Engagement, Adoption, Retention, and Task Success (which I'll refer to as Task Completion).

I've discussed 3 of them (Engagement, Adoption, and Retention) in a previous article, but let's see how choosing one of the UX metrics might result in different questions being prioritized.

In our example, imagine we're concerned about user motivation. Here are the questions you might raise based on what you choose:

Happiness: What features/pages are users most satisfied/dissatisfied with? What motivates them to take actions beyond the minimum (like giving additional feedback)?

Engagement: What motivates users to log in a second time or more? What features do users get the most value out of, or what 'signals' exist to suggest that someone will become a long-term user?

Adoption: What motivates users to search for your product in the first place (i.e., what problems are they looking to solve)? Why do they choose your product over your competitors?

Retention: What motivates users to become long-term customers or power users? If we have a freemium product, what motivates them to start paying for it?

Task Completion: What are users often trying to achieve, and can they achieve it? Are there difficulties around task completion that may demotivate users from continuing to use our product?

As you can see, the broad "user motivation" category can become much more focused, depending on which Goal and metric you choose. However, this isn't just a choice you should make for yourself: often, you can use a business's North Star Metric to help.

This is the metric that your business prioritizes above all else in its decision-making. Businesses pick one because choices rarely improve metrics across the board: one choice may improve some metrics while decreasing others.

You are almost assuredly on the right track if you align your UX metric with the business's North Star Metric. To give you a quick reference of some metrics for each category:

Happiness: Net Promoter Score (NPS), Customer Satisfaction Score (CSAT), App rating (i.e., 1–5 stars on Google Play, Amazon, etc.)

Engagement: Visits per user, Average Session time, Conversion Rate, Feature "Time to first use" (i.e., how long it takes users to use a key feature)

Adoption: Install Rate, New Users, Daily Active Users (DAU)

Retention: Repeat users/buyers, subscription rate, Daily/Weekly/Monthly Active users (DAU/WAU/MAU)

Task Completion: Error rate, Dropoff Rate (individual task), Churn rate (entire site)
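If you've never seen how these metrics are calculated, here is a small sketch of two of them. Net Promoter Score uses its standard definition (percentage of 9-10 ratings minus percentage of 0-6 ratings). The drop-off rate here is one common way to define it (the share of users who started a task but didn't finish), which is an assumption on my part; your Analytics tool may define it differently. All numbers are made up.

```python
def net_promoter_score(ratings):
    """NPS from 0-10 'how likely are you to recommend us' ratings.

    Promoters score 9-10, detractors score 0-6.
    NPS = % promoters - % detractors, so it ranges from -100 to 100.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def dropoff_rate(started, completed):
    """Share of users who started a task but did not finish it (assumed definition)."""
    if started == 0:
        raise ValueError("no one started the task")
    return (started - completed) / started


survey_ratings = [10, 9, 8, 7, 6, 3, 10, 9]  # made-up survey answers
print(net_promoter_score(survey_ratings))  # 4 promoters, 2 detractors out of 8
print(dropoff_rate(started=200, completed=150))
```

Neither calculation is complicated, which is the point: once you know which metric matters, the number itself is usually easy to get.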

I elaborate on this topic further in my book, but for this article, let's follow up with another crucial aspect: pairing this with qualitative feedback.

Balancing Analytics with qualitative insights

Contrary to popular belief, analytics can't answer every question: tons of failed companies tried to rely on metrics to guide them instead of user research.

While metrics can indicate how many users clicked on a button or how long they spent on a page, they can't explain the "why" behind those actions. This is why there's often a qualitative element in user research.

Once you've identified the metrics you want to monitor, you need to figure out how to integrate them with your qualitative-based research method.

For example, in my project, I employed remote, unmoderated tests with follow-up questions (and Analytics) to understand what a user did, how they felt at the time, and more. I would then use that data to choose specific questions to ask at a follow-up user interview, where I got their impressions.

Doing this allowed me to see where they struggled (or spent the most time) on their capture, learn how they felt at the time, and ask about it later.

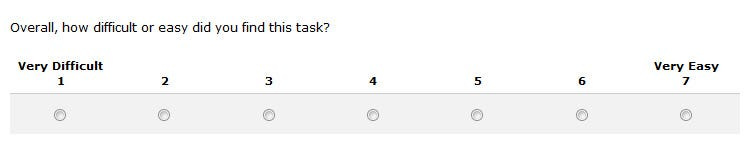

For example, suppose users spent significant time on a page and rated a task as "Very difficult." That might indicate a challenging task, which I could get more feedback about in the user interview.
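As a rough sketch of that pairing, the snippet below combines time-on-page data with in-app task ratings to produce a shortlist of moments worth asking about in the interview. Everything here is hypothetical: the field names, the sample records, the "Difficult" and "Neutral" labels on the rating scale, and the choice of "above the median time" as the cutoff for "significant time."

```python
from statistics import median

# Hypothetical records: one row per user per task, joining Analytics
# (seconds_on_page) with the pop-up survey answer (rating).
sessions = [
    {"user": "ana", "task": "setup", "seconds_on_page": 420, "rating": "Very difficult"},
    {"user": "ben", "task": "setup", "seconds_on_page": 95, "rating": "Easy"},
    {"user": "cy", "task": "setup", "seconds_on_page": 380, "rating": "Difficult"},
    {"user": "ana", "task": "report", "seconds_on_page": 60, "rating": "Easy"},
    {"user": "ben", "task": "report", "seconds_on_page": 120, "rating": "Neutral"},
]

HARD_RATINGS = {"Difficult", "Very difficult"}


def interview_candidates(rows):
    """Return (user, task) pairs with above-median time AND a hard rating."""
    typical = median(r["seconds_on_page"] for r in rows)
    return [
        (r["user"], r["task"])
        for r in rows
        if r["seconds_on_page"] > typical and r["rating"] in HARD_RATINGS
    ]


for user, task in interview_candidates(sessions):
    print(f"Ask {user} about the '{task}' task")
```

The code only tells you where to look. The interview is still where you find out why the task felt difficult.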

This method added only a little burden for users: random pop-up questions while using the application and a user interview afterward. It produced a lot of data on the user research side, but it wasn't too much additional effort because I had narrowed down exactly what I was looking for. This is what Analytics can offer you.

The Collaborative Power of Analytics

Getting started with Analytics for user research was more straightforward than anticipated; the higher-ups were incredibly supportive.

I had one conversation with the Product Owner, where I explained that I wanted to look at Analytics to guide user research, and then they were totally on board.

The reason is that many businesses value data-driven insights. So, when you offer to pair user research with the business metrics they're familiar with, it helps them understand user research and see its value.

If you ever run into use cases mainly concerned with long-term usage and user motivation, consider pairing your user research with analytics. You may discover that answers to your questions exist in data you've yet to explore.

Kai Wong is a Senior Product Designer and Data and Design newsletter writer. His book, Data-Informed UX Design, provides 21 small changes you can make to your design process to leverage the power of data and design.