Create growth experiments to persuade teams to invest in user-requested features

How to persuade teams to invest in new features requested by users

I learned to appreciate Growth Experiments when I convinced my team to finally build a new feature users constantly requested.

In an age of tight UX budgets, I’ve found that certain types of user feedback can fall flat. Your team is probably very interested in fixing problems and usability issues, but they’re often much less interested in user feature requests.

Some of this may be due to a lack of resources or competing priorities. Still, I’ve also run into another main reason: it’s hard to justify a large investment of resources based on qualitative research alone. After all, you only tested with a few people: how do you know others will want the same thing?

This is where growth experiments can help: they let you build a case, backed by solid evidence and data, for making a user suggestion a reality. Here’s how it works.

Growth Experiments and how they can augment user research

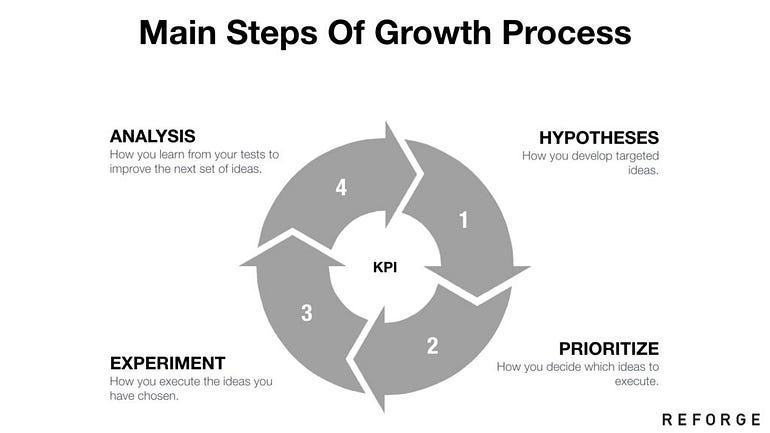

At its core, a Growth Experiment consists of 5 steps:

1. Analyze User/Data Insights to identify customer problems
2. Attach problems to clear outcome metrics like Monthly Active Users (MAU)
3. Ideate on design solutions
4. Create hypotheses on how specific solutions can impact an outcome metric and by how much
5. Execute scientific tests to prove/disprove hypotheses

Growth experiments are often considered part of a separate job field called Growth Design, which I’ve previously discussed. However, there’s one place where this field often intersects with your typical Design process: User research.

When conducting user research, you don’t just hear the user’s impressions on the tasks you’ve given them; you often learn more about their motivations, job responsibilities, frustrations, and more.

While you might only be testing one part of the application, this is often when the user might vent to you about the application as a whole and what they most often struggle with.

For example, you might be testing a page accessible by top-level navigation, but the user might spend a lot of time telling you how much the home page sucks and how it’s always so difficult to figure out how to get somewhere.

What used to suck, though, was the sinking realization that I didn’t have the power to do anything about it. I was only supposed to be gathering feedback on a specific page, and all that other feedback would likely be ignored.

That changed when I learned about Growth Experiments. Suddenly, if I constantly heard feedback on some specific user request or feature, I had a way to build a proposal to get my team to act on it. Here’s how to do that.

Making a [Business] Case for Your Growth Experiment

The first thing you need to do when starting a Growth Experiment is gather additional evidence. Your research has already uncovered the user need; now you also need to find the business need.

I learned during this process that the team is often aware of an issue: for example, they know that the home page sucks. However, in their mind, it’s a lower priority than other features. To convince them otherwise, you need to gather additional evidence, such as:

Competitive analysis

Customer surveys or other formal user feedback

Customer support tickets or feature requests

User research (or best practices) from other organizations

Industry standards or benchmarks

Other industry data, such as case studies, market research reports, or academic research

We’re trying to achieve three main things when we look for additional evidence.

The first is to verify that we’re not mistaken: when you dig into additional data, it could turn out that what users say is a top priority actually isn’t. After all, we know that what users say often doesn’t match what users do.

The second is to identify which metrics the business cares about are tied to this data. For example, if we find additional evidence of how much our homepage sucks, which metrics is that affecting? Are those metrics a top priority for the business?

Lastly, we want to see whether there is enough clear evidence connecting user behavior to these metrics. This last reason is crucial because it ties directly to the next step of the process: defining a hypothesis.

Hypotheses provide constraints for deciding what to test

The core of your growth experiment proposal should be centered around a hypothesis, as it provides a limited scope of what you’re trying to achieve. To explain this, let me reference a hypothesis I use often in my Data-Informed Design book:

“If we do X, users will do Y because of Z, which will impact metrics A.”

The hypothesis provides a clear and powerful statement that will either be proven or disproven at the end of your experiment. By formatting your ask like this, you provide much more assurance than you realize.

One of the most essential things you provide with this hypothesis is a way to tie feature building, user behavior, and metrics together. The first half of the hypothesis is a glimpse into user behavior: we’re saying that we understand our users enough to predict what they’ll do and why if we build a feature.

How can we make such a bold claim? Some of this we heard directly from users during testing. Some of it we might know from the user personas we’ve built. The additional evidence we gathered can also provide insights.

However, what’s equally interesting is the business case we make in the latter half. It’s sometimes difficult for teams to see how user behavior translates into metrics: after all, a few extra clicks for the user might not seem like much until you realize that you have millions of users (and it impacts the average time on the page).

By tying all these things together in one clear sentence, you’ve provided some insight (and hopefully a strong business case) for investing time and effort.

All you need to do now is talk about how you’d test it.

A/B Testing, the most common method of growth experiment

This is probably the trickiest part of a Growth Experiment proposal, but it’s simpler than you might realize.

While you don’t always have to use A/B testing for Growth Experiments, it’s often the most straightforward way of running one.

To run an A/B test, you essentially need four things:

Two design solutions to test

A key metric to measure to determine a winner

Defined parameters for the test (population, duration)

Enough traffic for statistical significance

The first two points shouldn’t be problematic for us at this stage. We can design solutions later, and our hypothesis has already helped us determine our key metric.

The last two points are often the trickiest part, and I won’t delve too deep into the technical details. However, it essentially comes down to using our Designer’s intuition and the evidence we’ve gathered to answer two questions:

How big of an impact do you expect this to be?

How much better does one option need to perform to be declared the winner?

For example, if all you’re doing is changing a single button on the homepage, is it reasonable to expect a 75% increase in traffic? Not really. A 10% increase in traffic might be more realistic if it’s a well-placed button.

Likewise, how much better does a particular option need to perform to be declared the winner? If option A results in a 10% increase in traffic, and option B results in a 10.01% increase, is that enough? Or would a 15% increase be enough?

Answering these questions helps you work out the number of users you need for your A/B test, but remember that all you need is an estimate. As a result, this can be as simple as:

Estimating percentage values for each design

Putting the values into a sample-size calculator

Presenting those values to your team while stating that they can be double-checked by Data professionals (like Data Scientists or Business Analysts)
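If you’re curious what a sample-size calculator does with those percentages, here is a minimal sketch in Python. It assumes your key metric is a rate (such as the share of visitors who click through), uses the standard normal-approximation formula for comparing two proportions, and defaults to the conventional 5% significance level and 80% power. The function name and the example rates are my own placeholders, not values from a real experiment, and an online calculator may use a slightly different formula, so treat the result as a ballpark figure for your Data professionals to verify.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_control, p_variant, alpha=0.05, power=0.80):
    """Rough number of users needed in EACH variant of an A/B test.

    p_control / p_variant: estimated rates for each design (0-1), e.g. 0.10.
    alpha: accepted false-positive rate (two-sided); 0.05 is a common default.
    power: chance of detecting the difference if it is real; 0.80 is common.
    All default values here are assumptions, not recommendations.
    """
    if not (0 < p_control < 1 and 0 < p_variant < 1):
        raise ValueError("rates must be between 0 and 1 (exclusive)")
    if p_control == p_variant:
        raise ValueError("rates must differ, or there is nothing to detect")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # power threshold
    p_bar = (p_control + p_variant) / 2            # pooled rate

    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(
            p_control * (1 - p_control) + p_variant * (1 - p_variant)
        )
    ) ** 2
    return math.ceil(numerator / (p_control - p_variant) ** 2)


if __name__ == "__main__":
    # Hypothetical estimates: a clear gap versus a razor-thin one.
    print("10% vs 15%:   ", sample_size_per_variant(0.10, 0.15))
    print("10% vs 10.01%:", sample_size_per_variant(0.10, 0.1001))
```

Running it with the two hypothetical pairs shows why the "how much better" question matters: the smaller the difference you want to detect, the more users each variant needs, and a razor-thin gap can demand far more traffic than most products have.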

After this, you’ll have everything you need to assemble a Growth Experiment proposal for your team.

Growth Experiments are an excellent framework for user requests

Just because you don’t have the job title of Growth Designer doesn’t mean you can’t leverage Growth Experiments to convince your team to build new features.

Growth Experiments are a framework that tackles one of your team’s biggest fears: that if they build this feature, no one will use it. After all, your primary data source is only a few users from user testing.

However, by reinforcing your user research with additional evidence and providing a clear picture of the experiment, you are making a clearer ask: everything you need, and what the business can expect in return.

As a result, your business becomes more receptive to these new ideas. So, if you’ve ever encountered a critical user insight you’re not sure your team will act on, try turning it into a Growth Experiment.

Doing so can persuade your team to try.

Kai Wong is a Senior Product Designer and Data and Design newsletter writer. His book, Data-Informed UX Design, provides 21 small changes you can make to your design process to leverage the power of data and design.